Don’t let AI become a cost center.

As organizations increasingly adopt AI solutions, the challenge isn’t just about choosing the right models—it’s about deploying them efficiently. Azure AI Foundry offers a deployment framework that, when properly balanced, can dramatically improve both your bottom line and operational performance.

The Three Pillars of AI Foundry Deployments

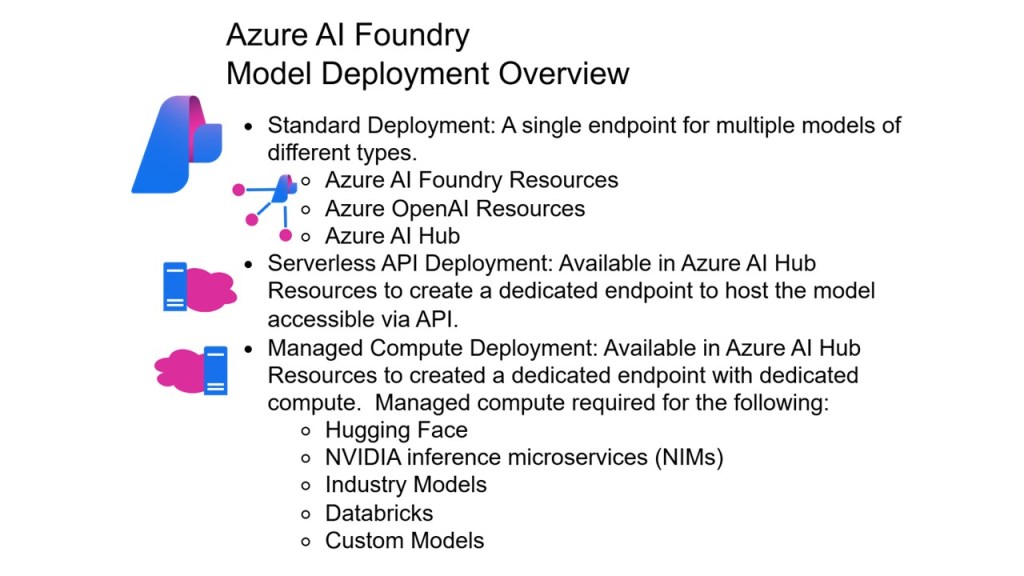

Azure AI Foundry provides three primary deployment options, each designed for specific use cases:

Standard Deployments serve as your go-to option for most scenarios, offering the best model availability for fast deployments and high usage limits. These pay-per-call deployments provide flexibility and quick startup times, making them ideal for development and moderate production workloads.

Provisioned Deployments represent the premium tier, providing reserved capacity for high and predictable throughput requirements. Unlike standard deployments, usage limits don’t apply to provisioned compute, giving you guaranteed performance for mission-critical applications.

Batch Deployment Workloads emerge as the cost-efficiency champion, offering up to 50% cost savings compared to standard deployments. With a 24-hour target turnaround for asynchronous processing, batch deployments excel at handling large-scale data processing tasks that don’t require immediate results.

Cost Optimization: More Than Just Choosing the Cheapest Option

The path to cost optimization isn’t simply selecting the lowest-priced deployment type—it’s about strategic alignment between workload characteristics and deployment capabilities.

Batch workloads present the most compelling cost opportunity. When your use cases can tolerate delayed processing—such as content generation, document analysis, or large-scale data transformation—batch deployments deliver significant cost reductions while maintaining processing quality. The key is identifying which workloads can shift from real-time to batch processing without impacting business outcomes.

Right-sized deployments represent another crucial cost optimization strategy. Many organizations over-provision their AI infrastructure, paying for capacity they rarely use. By carefully analyzing actual usage patterns and matching them to appropriate deployment types, you can eliminate waste while ensuring adequate performance.

Volume and Scale: Building for Growth

Different deployment types excel at different volume patterns, and understanding these characteristics is crucial for scalable architecture.

Standard workloads shine in scenarios requiring flexibility and burst capacity. They handle low to medium volume workloads exceptionally well, particularly those with high burst rates. This makes them perfect for applications with unpredictable traffic patterns or seasonal variations.

Provisioned workloads become essential as your AI operations mature and volume becomes predictable. By reserving capacity, you eliminate the latency variability that can occur with standard deployments under heavy load. This consistency is crucial for customer-facing applications where performance directly impacts user experience.

Data zone options add another layer of sophistication, allowing you to maintain data processing within specified geographic zones while still benefiting from Azure’s global infrastructure. This capability is particularly valuable for organizations with strict data residency requirements.

Process Duration: Matching Deployment to Timeline

The temporal requirements of your AI workloads should significantly influence your deployment strategy.

Real-time processing demands immediate response capabilities, making standard and provisioned deployments your primary options. These deployments excel at interactive applications, customer service chatbots, and real-time analytics where delays aren’t acceptable.

Batch processing transforms how you approach large-scale AI tasks. With 24-hour target turnaround times, batch deployments are perfect for content generation at scale, document review and summarization, and extensive data analysis projects. The key insight is recognizing that not all AI workloads require immediate results.

Burst handling capabilities of standard deployments provide crucial flexibility for applications with unpredictable load patterns. This makes them ideal for applications that experience sudden traffic spikes or seasonal variations.

Operational Excellence Through Strategic Balance

The real power of Azure AI Foundry emerges when you combine different deployment types within a cohesive architecture.

Latency Management becomes predictable with provisioned deployments for critical paths while using standard deployments for less critical functions. This hybrid approach optimizes both performance and cost.

Resource Efficiency improves through global deployments that leverage Azure’s infrastructure to dynamically route traffic to the best available data center. This intelligent routing improves overall resource utilization and reduces the need for manual load balancing.

Scalability increases when you can mix deployment types to handle different workload patterns within the same solution architecture. Your architecture becomes more resilient and adaptable to changing business needs.

Compliance and Data Residency requirements are met through data zone deployments, which provide a middle ground between global scale and regional data processing requirements.

Implementation Strategy: From Theory to Practice

Successful deployment optimization requires a methodical approach to understanding your workload patterns and matching them to appropriate deployment types.

Start by analyzing your current AI workloads and categorizing them by urgency, volume, and processing requirements. Identify which processes can move to batch processing for immediate cost savings. Reserve provisioned capacity for your most critical, high-volume applications where performance consistency is paramount.

Consider implementing a tiered approach: use global standard deployments for general workloads, provisioned deployments for critical high-volume applications, and batch processing for large-scale data processing tasks that don’t require immediate results.

Monitor and adjust your deployment mix regularly. As your AI operations mature and usage patterns become clearer, you can optimize further by shifting workloads between deployment types based on actual performance data.

The Bottom Line

Optimizing Azure AI Foundry model deployments isn’t just about reducing costs—it’s about building a sustainable, scalable AI infrastructure that grows with your business. By strategically balancing deployment types across cost, volume, scale, and process duration considerations, you ensure you’re not overpaying for unused capacity while maintaining the performance and reliability your applications require.

The organizations that master this balance will find themselves with a significant competitive advantage: AI operations that are both cost-effective and capable of scaling to meet future demands. In the rapidly evolving AI landscape, this operational excellence can be the difference between AI initiatives that thrive and those that become unsustainable cost centers.

Ready to optimize your AI Foundry deployments? Start by auditing your current workloads and identifying quick wins through batch processing migration and right-sized deployment selection.

Leave a comment